Entropy is generally defined as the degree of randomness of a macroscopic system. Internal interactions between the various subsystems each give rise to their own entropy change. Thus the entropy change will be 13.7 J/K.

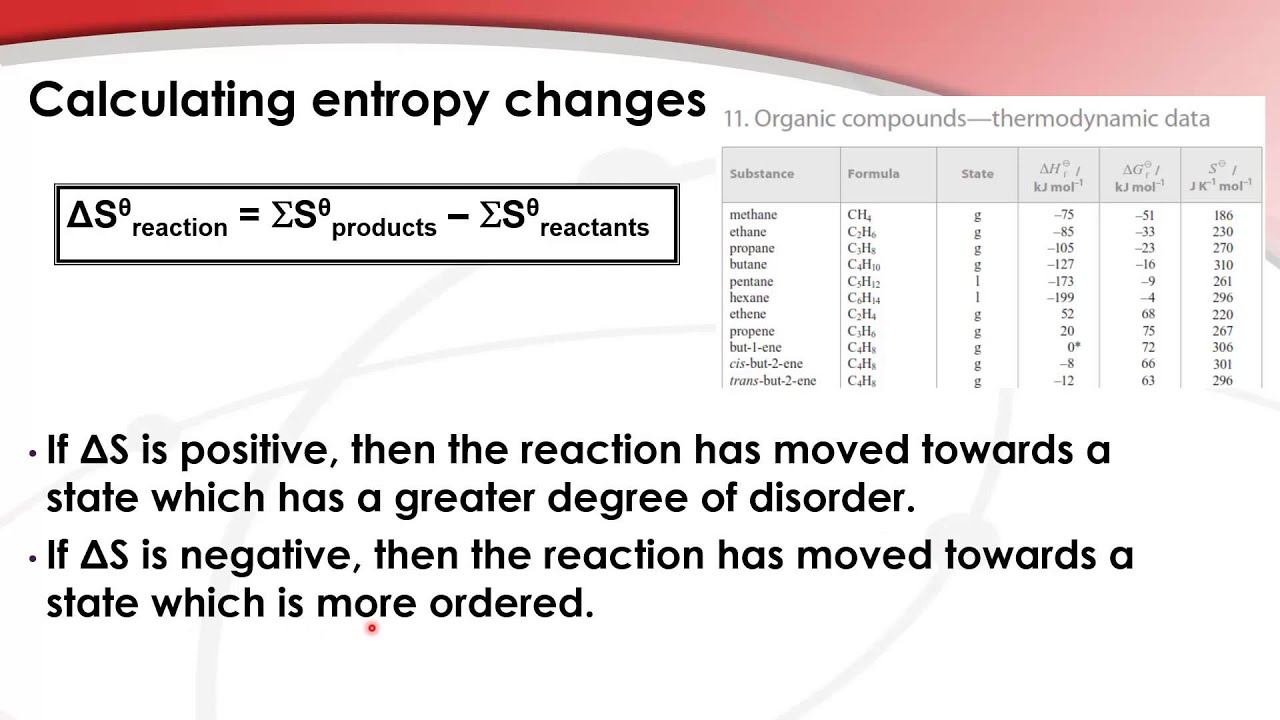

Generally, entropy is defined as a measure of the randomness or disorder of a system. The concept was introduced by the German physicist Rudolf Clausius in 1850.

From the thermodynamic viewpoint, we do not consider the microscopic details of a system. Instead, entropy is described in terms of macroscopic thermodynamic quantities such as temperature, pressure, and heat capacity. This description considers systems in a state of equilibrium. The statistical definition, on the other hand, is given in terms of the statistics of the molecular motions of a system.

Thus entropy is a measure of molecular disorder. For example, the entropy of a solid, where the particles are not free to move, is lower than the entropy of the same substance as a gas. Entropy is represented by S; in the standard state, it is represented by S°.

Scientists have concluded that for a process to be spontaneous, the total entropy of the system and its surroundings must increase. Hence, entropy is the state function we define to explain the spontaneity of a process. It is a state function because it depends only on the state of the system and not on the path followed to reach it. It is also an extensive property, because it scales with the size or extent of the system.

The more disorder exists in an isolated system, the higher its entropy. During chemical reactions, if the reactants break into a larger number of product particles, entropy increases. A system at a higher temperature has greater randomness than the same system at a lower temperature. Therefore, it is clear that entropy increases as regularity decreases.

We can calculate entropy using several different equations. Method 1: if the process takes place at constant temperature, then $\Delta S = q_{rev} / T$, where $q_{rev}$ is the heat exchanged reversibly and $T$ is the absolute temperature.
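The constant-temperature method can be sketched in a few lines of Python. This is a minimal illustration, assuming the standard relation ΔS = q_rev / T for a reversible isothermal process. The function name `entropy_change_isothermal` is hypothetical, and the numbers (melting one mole of ice, about 6010 J at 273.15 K) are textbook values chosen for illustration, not the figures behind the 13.7 J/K result quoted in this article.

```python
def entropy_change_isothermal(q_rev_joules, temperature_kelvin):
    """Return the entropy change in J/K for heat q_rev exchanged
    reversibly at a constant absolute temperature T (assumed relation:
    delta_S = q_rev / T)."""
    if temperature_kelvin <= 0:
        raise ValueError("absolute temperature must be positive")
    return q_rev_joules / temperature_kelvin


# Illustrative values (not from the article): melting 1 mol of ice
# absorbs roughly 6010 J at its melting point of 273.15 K.
delta_s = entropy_change_isothermal(6010.0, 273.15)
print(f"Entropy change: {delta_s:.1f} J/K")  # prints "Entropy change: 22.0 J/K"
```

The result is positive, which matches the qualitative rule that going from an ordered solid to a less ordered liquid increases entropy.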
Consider a data set with a total of N classes. Its entropy (E) can be determined with the formula below:

$$E = -\sum_{i=1}^{N} P_i \log_2 P_i$$

where $P_i$ is the probability of randomly selecting an example in class $i$. For a data set with two classes, entropy lies between 0 and 1. With more classes it can be greater than 1, up to a maximum of $\log_2 N$.
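The data-set entropy can be computed directly from a list of class labels. The sketch below assumes a base-2 logarithm (which is what gives the 0-to-1 range for two classes) and estimates each probability as the fraction of examples in that class. The function name `shannon_entropy` and the sample labels are hypothetical.

```python
import math
from collections import Counter


def shannon_entropy(labels):
    """Return the base-2 entropy of a non-empty sequence of class labels."""
    total = len(labels)
    if total == 0:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    # Sum -p * log2(p) over every class that actually occurs.
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


two_classes = shannon_entropy(["yes", "no", "yes", "no"])  # evenly split
four_classes = shannon_entropy(["a", "b", "c", "d"])       # evenly split
one_class = shannon_entropy(["yes", "yes", "yes"])         # no uncertainty

print(two_classes)   # 1.0
print(four_classes)  # 2.0
print(one_class)     # 0.0
```

The evenly split four-class case shows how entropy exceeds 1 once there are more than two classes: its value equals log2(4) = 2.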
